IROS 2026 Submission

Closed-loop Diffusion Planning over Multi-Modal Distribution for Robot Follow-Ahead

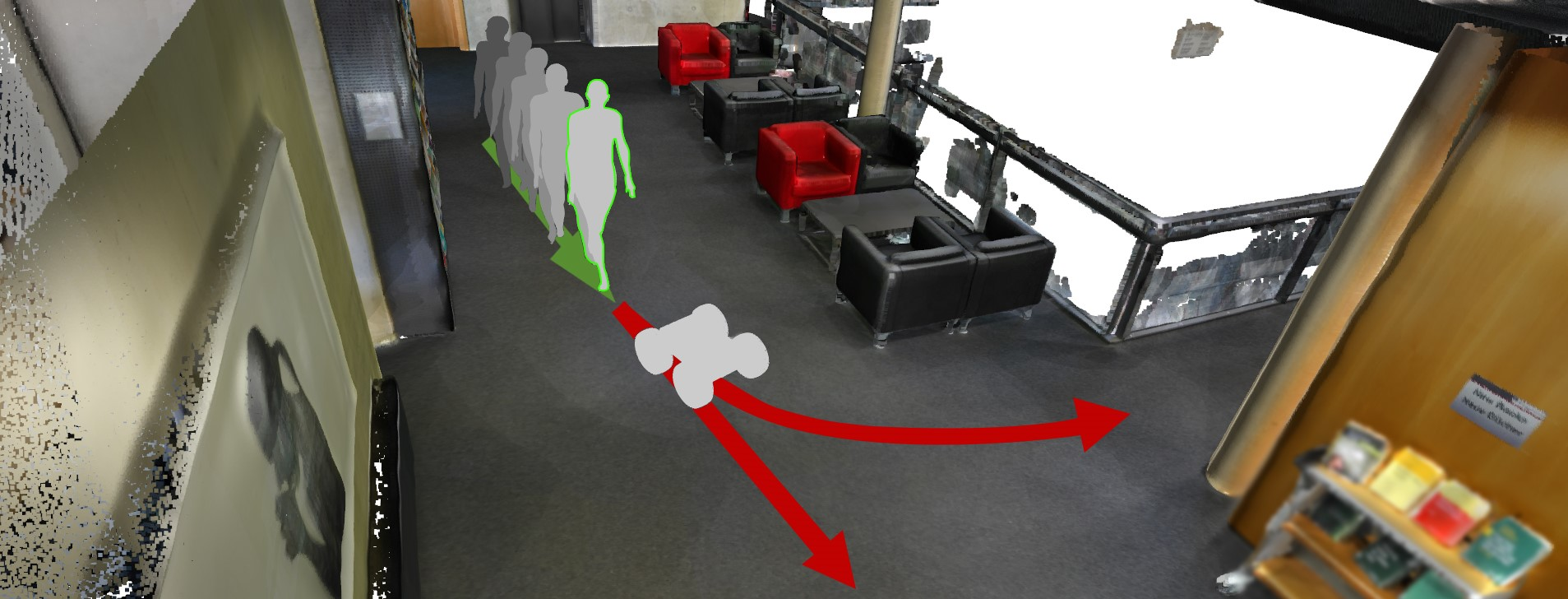

A robot camera operator

Imagine a camera operator walking backward while filming a person. To capture expressive, front-facing shots, the camera must stay ahead of the actor, not behind. Humans do this naturally: we make quick guesses about what comes next and adapt while moving.

In robot follow-ahead, this “instinct” is exactly the hard part. The robot must move first, before the person fully commits to a direction.

One future is not enough

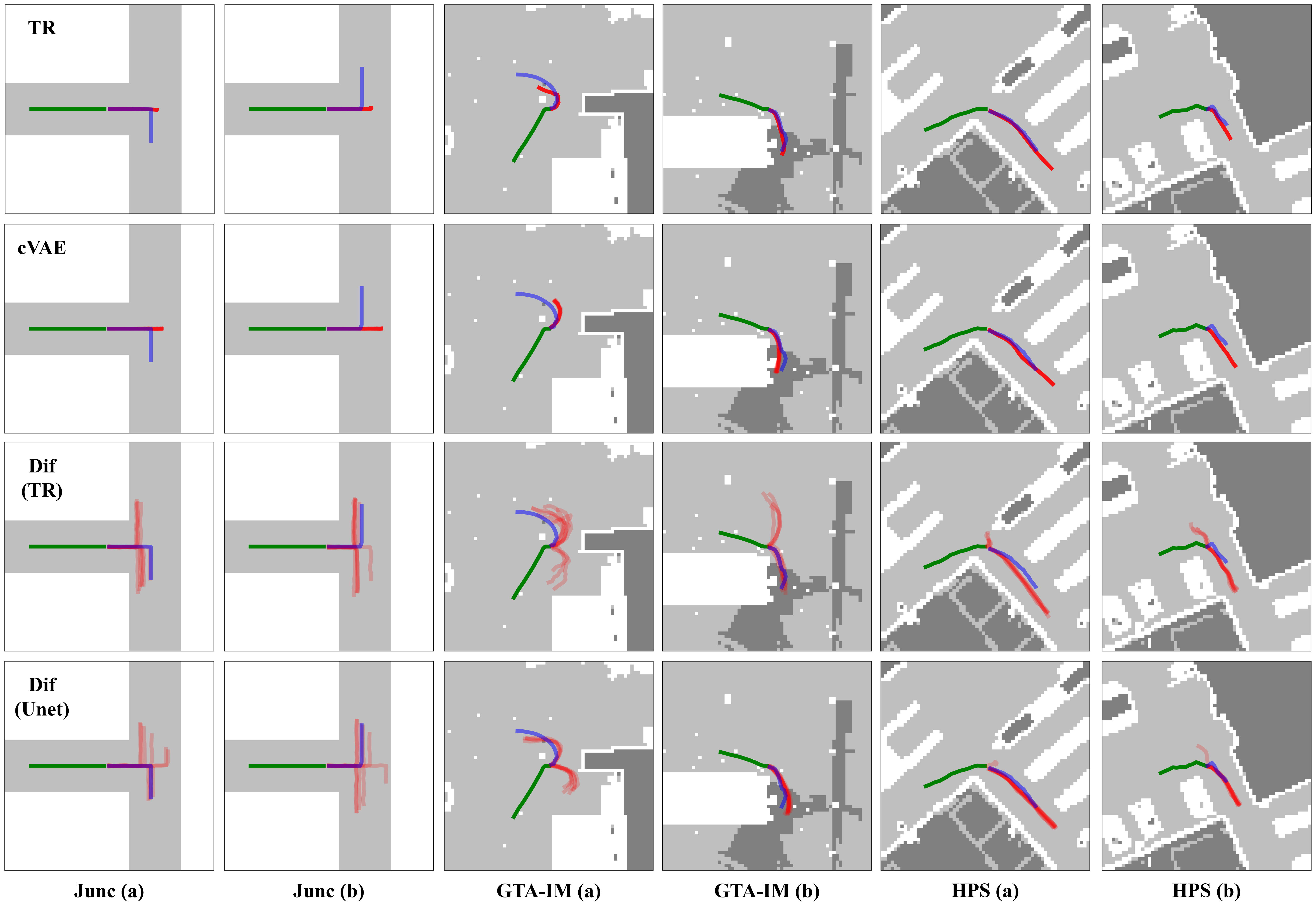

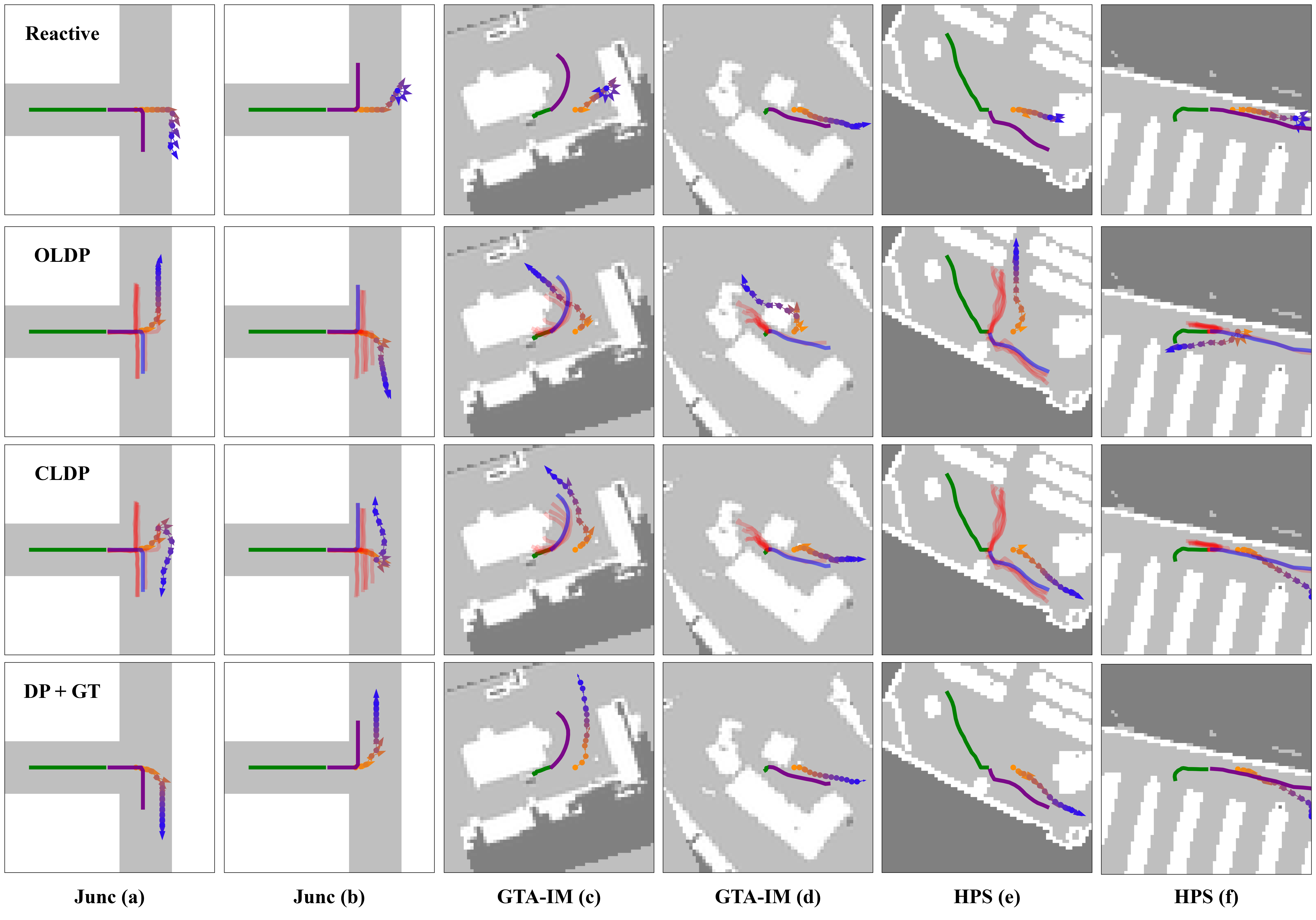

At a hallway junction, both left and right futures are plausible. A single mean trajectory blurs these possibilities and often leads to indecisive or unsafe motion. We need a planner that reasons over multiple likely futures, not one average future.
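A toy example makes the averaging problem concrete. The waypoints below are hypothetical (x forward, y lateral, in meters) and are not taken from our data; they only illustrate that the step-by-step mean of a left turn and a right turn is a path neither person would walk.

```python
# Hypothetical futures at a junction: (x, y) waypoints, x forward, y lateral.
left_future = [(1.0, 0.0), (2.0, 0.5), (2.5, 1.5), (2.5, 2.5)]
right_future = [(1.0, 0.0), (2.0, -0.5), (2.5, -1.5), (2.5, -2.5)]


def mean_trajectory(trajectories):
    """Average the waypoints of several trajectories, step by step."""
    n = len(trajectories)
    return [
        (sum(p[0] for p in pts) / n, sum(p[1] for p in pts) / n)
        for pts in zip(*trajectories)
    ]


mean_future = mean_trajectory([left_future, right_future])

# The lateral offset of the mean is zero at every step: the "average person"
# keeps heading straight and never turns, matching neither real future.
for (x, y) in mean_future:
    print(f"x={x:.2f}  y={y:.2f}")
```

A robot that positions itself ahead of this mean path ends up in front of neither the left-turning nor the right-turning person.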

Diffusion and score intuition

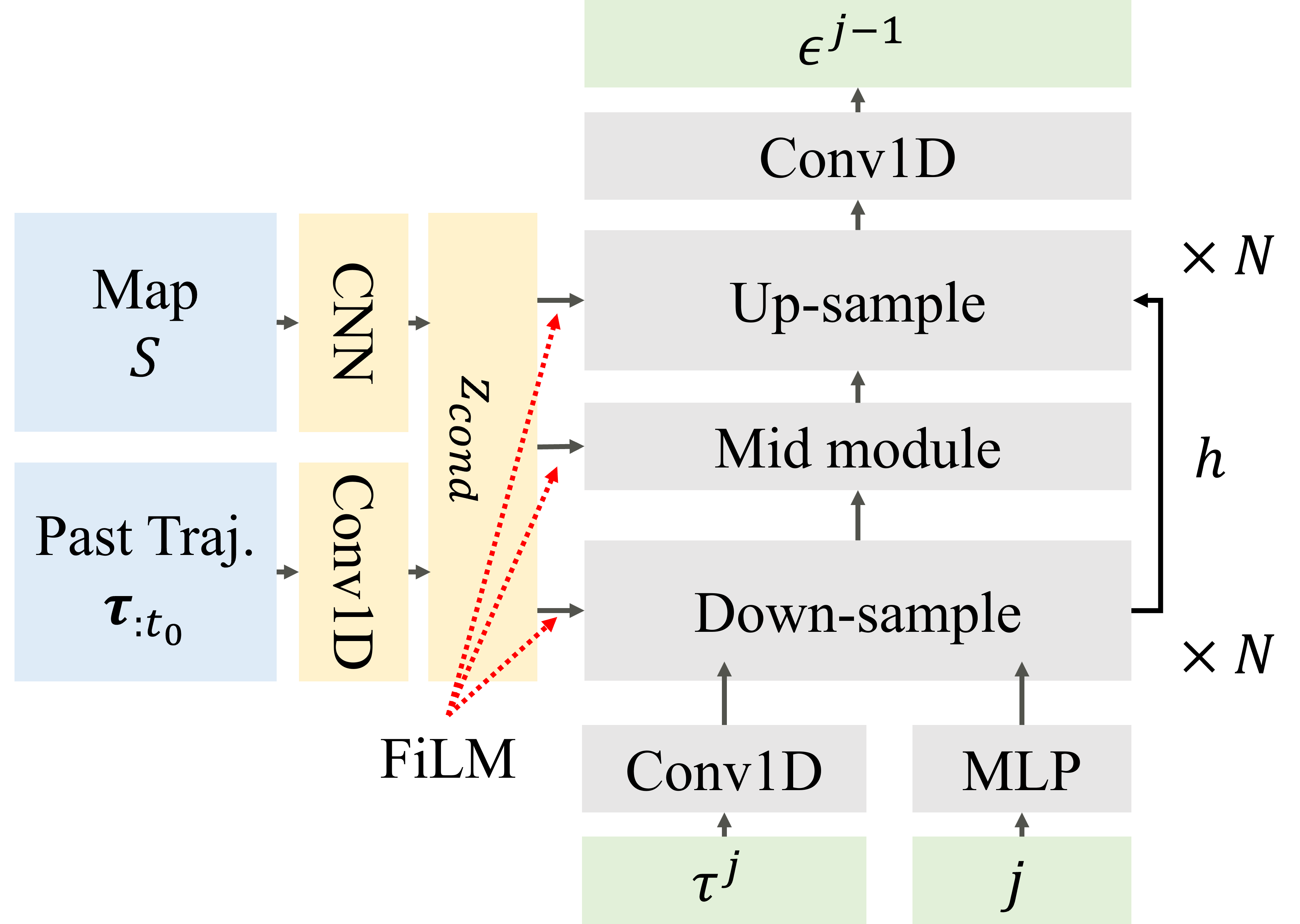

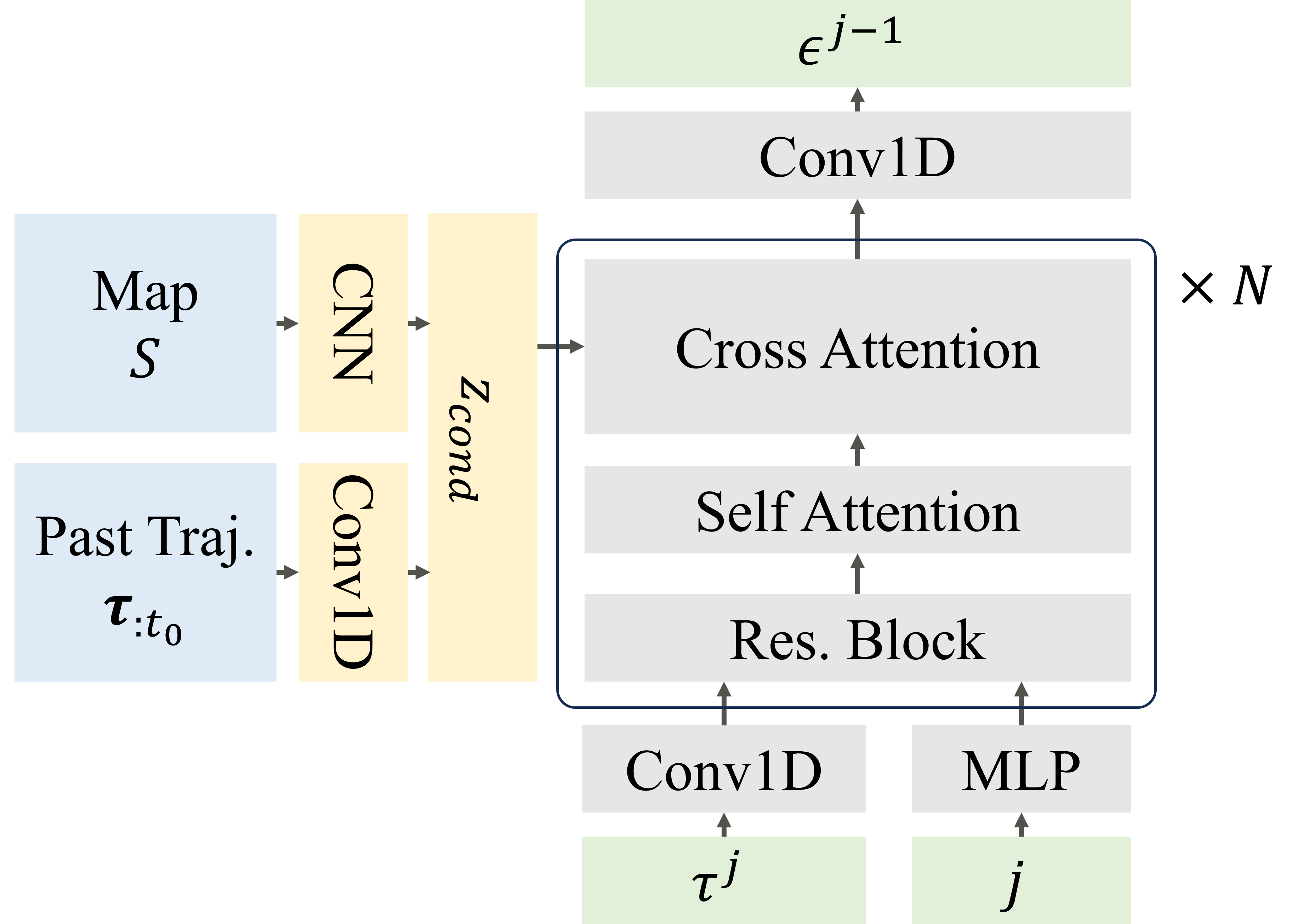

Diffusion-style modeling gives a practical way to represent complex multi-modal distributions: add noise in the forward direction, learn to denoise in the reverse direction. This lets us sample diverse candidate futures instead of collapsing to one guess.
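The forward (noising) half can be sketched in a few lines. This sketch assumes the common variance-preserving form x_t = sqrt(alpha_bar) * x_0 + sqrt(1 - alpha_bar) * eps; the function name, the one-dimensional "lateral offset" data, and the alpha_bar values are illustrative assumptions, not the exact schedule used in CLDP.

```python
import math
import random


def forward_noise(x0, alpha_bar, rng):
    """Sample x_t given x_0: sqrt(alpha_bar) * x0 + sqrt(1 - alpha_bar) * eps."""
    eps = rng.gauss(0.0, 1.0)
    return math.sqrt(alpha_bar) * x0 + math.sqrt(1.0 - alpha_bar) * eps


def fraction_near_modes(samples, radius=0.5):
    """Share of samples within `radius` of either mode (+2 or -2)."""
    hits = sum(min(abs(s - 2.0), abs(s + 2.0)) < radius for s in samples)
    return hits / len(samples)


rng = random.Random(0)
# Hypothetical bimodal data: lateral offset of a left (+2) or right (-2) turn.
clean = [rng.choice((-2.0, 2.0)) for _ in range(5000)]

# alpha_bar = 1 keeps the data intact; alpha_bar = 0 leaves pure Gaussian noise.
for alpha_bar in (1.0, 0.9, 0.5, 0.0):
    noised = [forward_noise(x, alpha_bar, rng) for x in clean]
    print(alpha_bar, round(fraction_near_modes(noised), 2))
```

As alpha_bar shrinks, the two modes dissolve into a single Gaussian blob. The learned reverse process has to undo this, which is why its samples can land in either mode rather than in between.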

From the score-based view, the model learns a vector field that points toward higher-probability regions. Sampling follows that field, gradually refining uncertain predictions into structured trajectories.
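To illustrate how sampling follows such a field, the sketch below runs unadjusted Langevin dynamics on a one-dimensional mixture of two Gaussians, where the score (the gradient of the log-density) is available in closed form. The modes, noise scale, and step size are hypothetical; in a trained model the analytic `score` function would be replaced by a learned network.

```python
import math
import random

MODES = (-2.0, 2.0)  # hypothetical "right" and "left" modes
SIGMA = 0.5          # hypothetical per-mode standard deviation


def score(x):
    """Gradient of log p(x) for an equal-weight mixture of two Gaussians."""
    logits = [-((x - m) ** 2) / (2 * SIGMA ** 2) for m in MODES]
    top = max(logits)  # subtract the max for numerical stability
    w = [math.exp(l - top) for l in logits]
    z = sum(w)
    # Responsibility-weighted pull toward each mode.
    return sum(wi / z * (m - x) / SIGMA ** 2 for wi, m in zip(w, MODES))


def langevin_sample(rng, steps=500, step_size=0.01):
    """Start from broad noise, then repeatedly step along the score plus noise."""
    x = rng.gauss(0.0, 3.0)
    for _ in range(steps):
        x += step_size * score(x) + math.sqrt(2 * step_size) * rng.gauss(0.0, 1.0)
    return x


rng = random.Random(0)
samples = [langevin_sample(rng) for _ in range(200)]
near_left = sum(abs(s - 2.0) < 1.5 for s in samples)
near_right = sum(abs(s + 2.0) < 1.5 for s in samples)
print("near +2:", near_left, " near -2:", near_right)
```

Each chain settles around one of the two modes, so the sample set keeps both futures instead of collapsing to their midpoint.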

Closed-loop Diffusion Planning (CLDP)

CLDP combines sampled human futures with closed-loop robot planning. At each control cycle, the robot updates posterior weights over sampled futures and replans accordingly. Instead of committing once, it keeps correcting as new observations arrive.
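The reweighting step can be sketched as a simple Bayesian update. The version below assumes an isotropic Gaussian observation model and two hand-written candidate futures; `update_weights`, `obs_sigma`, and all waypoints are hypothetical, and the robot-side replanning that would consume these weights is omitted.

```python
import math


def update_weights(weights, predicted_positions, observed_position, obs_sigma=0.3):
    """One update: w_k is proportional to w_k * N(obs | pred_k, obs_sigma^2 I)."""
    log_w = []
    for w, (px, py) in zip(weights, predicted_positions):
        sq_err = (observed_position[0] - px) ** 2 + (observed_position[1] - py) ** 2
        # Floor the prior weight to avoid log(0).
        log_w.append(math.log(max(w, 1e-300)) - sq_err / (2 * obs_sigma ** 2))
    top = max(log_w)  # subtract the max for numerical stability
    unnorm = [math.exp(l - top) for l in log_w]
    total = sum(unnorm)
    return [u / total for u in unnorm]


# Hypothetical sampled futures and observed human positions (x, y) in meters.
futures = {
    "left": [(1.0, 0.0), (2.0, 0.5), (2.5, 1.5)],
    "right": [(1.0, 0.0), (2.0, -0.5), (2.5, -1.5)],
}
observations = [(1.0, 0.05), (2.0, 0.4), (2.6, 1.4)]

names = list(futures)
weights = [0.5, 0.5]  # uniform prior over the sampled futures
history = []
for t, obs in enumerate(observations):
    preds = [futures[name][t] for name in names]
    weights = update_weights(weights, preds, obs)
    history.append(dict(zip(names, weights)))
    # A planner would replan here against the reweighted futures.
    print(t, {k: round(v, 3) for k, v in history[-1].items()})
```

While the two futures still coincide, the weights stay uniform and the robot can hedge; once the observations favor one branch, the weight shifts and the plan can commit.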

What changes in practice

The key gain is not only better prediction quality, but better decision quality under uncertainty. The robot maintains front-facing behavior longer and adapts earlier when human intent becomes clear.